Rewriting the Developer Funnel for an Agentic Era

- Kyle Tyacke

- 6 hours ago

Kyle Tyacke, Director of Technology

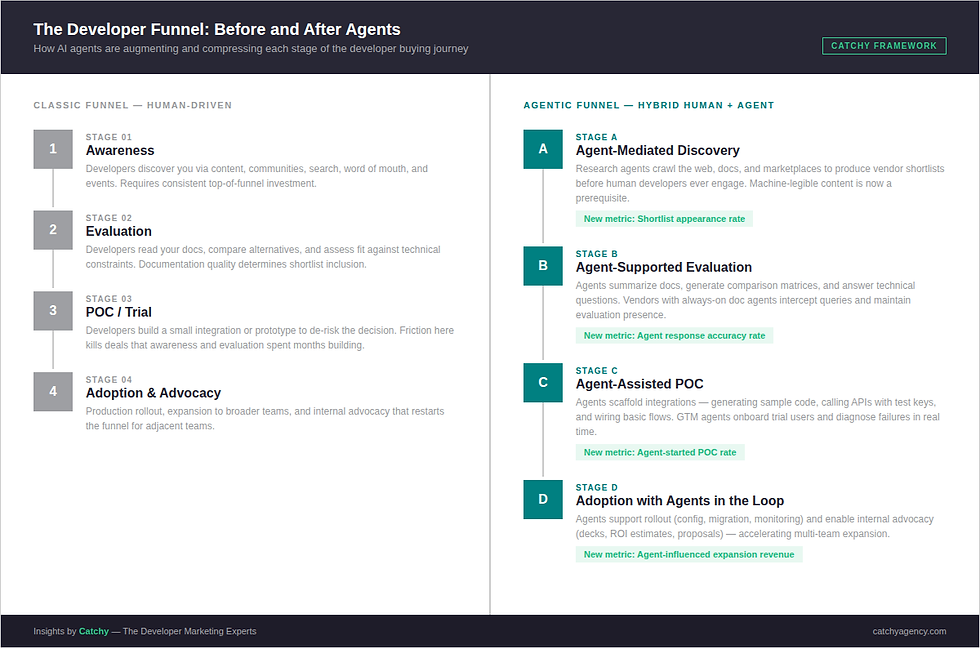

The classic developer funnel (awareness, evaluation, POC, adoption) is still the right mental model. But AI agents have fundamentally changed who does the work at each stage. Discovery is increasingly run by research agents. Evaluation is increasingly assisted by doc agents. POC scaffolding is increasingly initiated by AI tools. The funnel hasn't disappeared; it's become a hybrid human–agent system, and developer marketing strategy needs to catch up.

This matters because the consequences of falling behind are invisible. According to IDC, by 2028 fully 70% of B2B buyers will rely on generative AI to discover, evaluate, and select vendors before talking to sales, before visiting a portal, and before a human developer ever sees your name. If your content isn't machine-legible and your ICP isn't explicit, you may not make the list.

This post maps the agentic developer funnel stage by stage, identifies where the classic playbook breaks, and gives developer marketing leaders the framework and metrics to compete when their first audience is increasingly a machine.

The Classic Developer Funnel: A Baseline Worth Keeping

The developer marketing funnel has four stages. Each one still matters. Understanding how agents are disrupting each stage requires precision about how those stages operate when humans drive them.

Awareness is where developers discover you, through technical content, communities, search, word of mouth, and events. It rewards consistent investment in developer relations and presence in the spaces your target developers already frequent.

Evaluation is where developers read your docs, compare alternatives, and assess fit against their technical constraints. Documentation quality, completeness, and honesty determine whether you survive the shortlist.

POC is where commitment crystallizes. Developers build a small integration or prototype to de-risk their choice. Friction here kills deals that awareness and evaluation spent months building.

Adoption is production rollout and expansion and, when everything goes right, internal advocacy that restarts the funnel for adjacent teams.

"The developer funnel has always been longer and more trust-dependent than a traditional B2B sales cycle — which is exactly why the agentic disruption is so consequential."

The model works. But it was built for a world where humans are the primary actors at every stage. That world is changing fast.

In our experience working with Fortune 100 developer programs, the evaluation and POC stages are where the most value is won or lost. They're also exactly where agents are making their most significant incursions, automating work that used to require developer time and attention.

How Are AI Agents Changing the Developer Buying Journey?

The core disruption isn't that AI makes developers faster. It's that agents are taking over entire funnel stages that previously required a human developer's time and judgment.

Three roles are reshaping the journey.

Agents as Researchers

AI agents are increasingly the first step in enterprise technology evaluation. An engineering organization assessing a new API or platform often deploys an internal research agent to crawl the web, scan documentation, pull GitHub signals, and produce a structured vendor comparison, before any human reviews the options.

Your top-of-funnel content may never be read by a human in the traditional sense. An agent reads it, classifies it, and decides whether you belong on the shortlist. Poorly structured docs, an unclear ICP, or technical capabilities buried in marketing prose can filter you out before a developer ever encounters your product.

IDC's research on AI-mediated buying journeys is direct on this point: brands that succeed in agent-mediated discovery aren't just visible; they're findable in context, with knowledge assets that are structured, machine-readable, and validated by third parties. Relevance is now reinforced by what can be verified and referenced, not simply by what is claimed.

77% of B2B buyers already rely more on AI-driven tools than traditional search engines when making purchasing decisions. (Source: IDC, The New Rules of B2B Buyer Engagement)

Agents as Evaluators

Once a shortlist is set, agents continue doing work that used to require a developer's time. They summarize documentation, generate feature comparison matrices, answer technical constraint questions, and in more advanced deployments, run scripted API tests in sandbox environments.

Vendors who understand this are deploying their own doc agents, always-on technical counterparts that intercept both human and agent queries with structured, accurate answers. This is no longer a differentiator. It's becoming competitive infrastructure.

Agents as GTM Counterparts

On the vendor side, agents are reshaping the inbound funnel too. Chat SDRs, onboarding bots, and documentation assistants handle first points of contact that used to require DevRel staff or sales engineers. The question is no longer just "do we have enough humans for top-of-funnel volume?" It's "are our GTM agents good enough to qualify and nurture developers without losing them?"

According to Microsoft's 2025 Work Trend Index, 46% of business leaders say their companies are already using agents to automate procurement and evaluate products. For more on how agents function as distinct buyer personas in the developer space, see our post: AI Agents Are Your Next Buyer Persona.

"The developer funnel is no longer a one-way slide. It's a human–agent loop where a significant share of early and mid-funnel work is invisible unless you instrument for agent signals."

The Agentic Developer Funnel: What Changes at Each Stage?

The four classic stages don't disappear; they get augmented, compressed, and in some cases partially handed off to agents. Here's what changes at each one, what it means for your program, and what new metrics to track.

Stage A: Agent-Mediated Awareness and Discovery

What changes: Agents mine public content, documentation, marketplaces, and community signals to build vendor shortlists matching a technical spec or ICP. Human developers often see only the output (a ranked comparison), not the raw discovery process that produced it.

What it means for your program: Top-of-funnel content must be machine-readable. That means clean HTML structure, explicit feature lists, clear ICP statements, and honest constraint language. Presence in agent-friendly surfaces (API catalogs, protocol registries, marketplace listings, developer tool directories) is now as strategically important as traditional SEO.
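One common way to make feature lists and audience statements explicit is schema.org structured data embedded as JSON-LD. The sketch below is illustrative only: the product name, features, and audience are placeholders, and the choice of `SoftwareApplication` with `featureList` and `audience` is an assumption about what fits a typical API product, not a prescribed format.

```python
import json

# Hypothetical product details: every value below is a placeholder.
product = {
    "@context": "https://schema.org",
    "@type": "SoftwareApplication",
    "name": "Example Payments API",
    "applicationCategory": "DeveloperApplication",
    # State fit and constraints plainly so they survive summarization.
    "description": (
        "REST API for card payments. Built for B2B SaaS backends; "
        "not designed for in-person point-of-sale hardware."
    ),
    "audience": {
        "@type": "Audience",
        "audienceType": "Backend developers at B2B SaaS companies",
    },
    "featureList": [
        "Idempotent payment creation",
        "Webhook event delivery",
        "Sandbox environment with test credentials",
    ],
}


def to_json_ld_script(data: dict) -> str:
    """Wrap a structured-data dict in a JSON-LD script tag for an HTML page."""
    payload = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(to_json_ld_script(product))
```

The same dictionary can feed a marketplace listing or API catalog entry, so the claims an agent reads stay consistent across surfaces.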

For a deeper look at how to build documentation that agents can actually use, see our post: The Future of Documentation Is Machine-First.

New metrics to track:

- Appearances in AI-curated vendor shortlists (via marketplace analytics or partner telemetry)

- Inclusion rate in agent-generated research reports shared with buying groups

- Share of referral traffic from AI-native surfaces vs. traditional search
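The referral-share metric can be approximated from referrer URLs already present in most web logs. This is a minimal sketch: the two host lists are illustrative assumptions and would need to be maintained against the surfaces your audience actually uses, and many agent-mediated visits arrive with no referrer at all, so treat the result as a lower bound.

```python
from collections import Counter
from urllib.parse import urlparse

# Illustrative host lists (assumptions): extend them for your own traffic.
AI_HOSTS = {"chatgpt.com", "perplexity.ai", "copilot.microsoft.com", "gemini.google.com"}
SEARCH_HOSTS = {"google.com", "bing.com", "duckduckgo.com"}


def classify_referrer(referrer: str) -> str:
    """Bucket a referrer URL as 'ai', 'search', or 'other'."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in AI_HOSTS:
        return "ai"
    if host in SEARCH_HOSTS:
        return "search"
    return "other"


def ai_referral_share(referrers: list[str]) -> float:
    """Share of AI referrals among AI plus traditional-search referrals."""
    counts = Counter(classify_referrer(r) for r in referrers)
    comparable = counts["ai"] + counts["search"]
    return counts["ai"] / comparable if comparable else 0.0
```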

Stage B: Agent-Supported Technical Evaluation

What changes: Agents assist developers by summarizing docs, generating comparison matrices, and answering technical questions about constraints, performance, and integration paths. Your own doc agent can serve as an always-on sales engineer, available for every granular technical question at every hour.

What it means for your program: Documentation needs consistent structure and Q&A-style sections so agents return accurate answers rather than hallucinating. Comparative content (honest trade-off analyses, "when to choose us vs. X" guides) should be nuanced enough that agents can restate it without losing the substance. Treating your docs as a prompt-ready knowledge base, not just a human-facing reference, is the single highest-leverage documentation investment for this stage.

Key stat: 52% of developers cite lack of documentation as the biggest obstacle to API consumption — and agents amplify this problem rather than solving it. (Source: Postman State of the API Report)

New metrics to track:

- Agent-answered question volume and satisfaction scores from your own doc/product agent

- Time from first AI-assisted visit to first API call ("time-to-first-value with agent support")

- Agent response accuracy rate, a proxy for the quality and structure of your documentation
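As a rough illustration of the time-to-first-value metric, the sketch below derives it from a flat event log. The event shape (`account`, `type`, `at`) and the event type names are hypothetical; substitute whatever your analytics pipeline emits.

```python
from datetime import datetime, timedelta


def time_to_first_api_call(events: list[dict]) -> dict[str, timedelta]:
    """Per account: elapsed time from first agent-assisted visit to first API call.

    API calls made before any agent-assisted visit are ignored, and accounts
    that never make a qualifying call are left out of the result.
    """
    first_visit: dict[str, datetime] = {}
    first_call: dict[str, datetime] = {}
    for event in sorted(events, key=lambda e: e["at"]):
        account = event["account"]
        if event["type"] == "agent_assisted_visit":
            first_visit.setdefault(account, event["at"])
        elif event["type"] == "api_call" and account in first_visit:
            first_call.setdefault(account, event["at"])
    return {a: first_call[a] - first_visit[a] for a in first_call}
```

Reporting the median of these durations, rather than the mean, keeps a few slow accounts from dominating the number.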

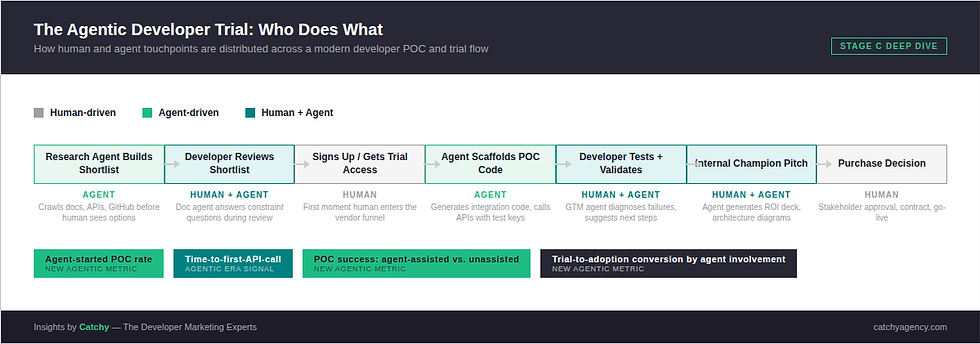

Stage C: Agent-Assisted POC and Trials

What changes: Agents scaffold POCs, generating sample code, calling APIs with test credentials, and wiring basic integration flows based on developer prompts. On the vendor side, GTM agents can onboard trial users, suggest next steps based on behavior, and diagnose integration failures in real time.

What it means for your program: Developer-facing assets (quickstarts, templates, sample applications) should be modular enough for agents to mix and match into tailored POC blueprints. Your trial instrumentation needs to distinguish between agent-driven and human-driven trial behavior so you can measure the lift your own agents are providing, and identify where human intervention still moves the needle.
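Distinguishing agent-driven from human-driven trial traffic usually starts with a heuristic. The sketch below assumes two things that are not part of any standard: a hypothetical `X-Client-Actor` header that cooperative clients could set, and a short, illustrative list of user-agent tokens published by some AI vendors. Anything it cannot place is labeled `unknown` rather than guessed.

```python
# Illustrative user-agent tokens (assumption): keep this list under review.
AGENT_UA_TOKENS = ("gptbot", "chatgpt-user", "claudebot", "perplexitybot")


def classify_actor(headers: dict[str, str]) -> str:
    """Return 'agent', 'human', or 'unknown' for one trial request."""
    normalized = {key.lower(): value for key, value in headers.items()}

    # Hypothetical opt-in header: trust an explicit declaration when present.
    declared = normalized.get("x-client-actor", "").strip().lower()
    if declared in {"agent", "human"}:
        return declared

    # Fall back to matching known agent tokens in the User-Agent string.
    user_agent = normalized.get("user-agent", "").lower()
    if any(token in user_agent for token in AGENT_UA_TOKENS):
        return "agent"
    return "unknown"
```

Storing the label on every trial event is what makes the segmented metrics in this stage possible later.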

Key stat: 84% of developers now use or plan to use AI tools in their development process, and among those using AI agents specifically, 70% report reduced time spent on development tasks. (Source: Stack Overflow 2025 Developer Survey)

New metrics to track:

- Agent-started POCs: trials where an internal or vendor agent initiated or materially scaffolded the proof of concept

- POC success rate segmented by level of agent involvement (doc agent used vs. not used)

- Trial-to-adoption conversion rate for agent-assisted trials vs. unassisted
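Once trials carry an agent-involvement flag, the segmented rates are simple to compute. The `Trial` record below is a hypothetical shape for illustration; real trial data will have more fields and likely a finer-grained notion of involvement than a single boolean.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    account: str
    agent_assisted: bool  # an agent initiated or materially scaffolded the POC
    poc_succeeded: bool
    adopted: bool


def rates_by_segment(trials: list[Trial]) -> dict[str, dict[str, float]]:
    """POC success and trial-to-adoption rates for assisted vs. unassisted trials."""
    report: dict[str, dict[str, float]] = {}
    for label, flag in (("agent_assisted", True), ("unassisted", False)):
        segment = [t for t in trials if t.agent_assisted == flag]
        count = len(segment)
        report[label] = {
            "trials": count,
            "poc_success_rate": sum(t.poc_succeeded for t in segment) / count if count else 0.0,
            "adoption_rate": sum(t.adopted for t in segment) / count if count else 0.0,
        }
    return report
```

Keep the trial counts next to the rates: a large gap between segments means little when one segment holds only a handful of trials.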

Stage D: Adoption, Rollout, and Advocacy with Agents in the Loop

What changes: Post-POC, agents support production rollout through configuration assistance, migration scripts, and monitoring setup. Developer advocates increasingly lean on agents to produce the internal decks, ROI analyses, and architecture diagrams they need to sell adoption to broader teams.

What it means for your program: Customer success content (runbooks, playbooks, ROI calculators) should be structured for both human reading and agent ingestion. Advocacy enablement can include agent-assisted resources: prompt templates for generating internal proposals, code-mod scripts for migration paths, or architecture diagram generators that champions can customize without starting from scratch.

For context on how enterprise buying committees shape this stage, see our post: The Enterprise Developer Journey Isn't Linear Anymore. It's Orchestrated.

New metrics to track:

- Retention and expansion rates segmented by high vs. low agent usage within accounts

- Internal advocacy artifacts generated with agent assistance (decks, proposals) and their correlation with multi-team adoption

- Support ticket deflection rate attributable to success-stage agent tools

What New Metrics Do Developer Marketers Need?

The stage-specific metrics above map directly to the funnel. Stepping back, there are four metric categories every developer marketing team needs to build into their reporting stack as the agentic era matures.

1. Visibility and Selection Metrics

These measure whether you appear and win in agent-mediated discovery. Two numbers matter: appearances in AI shortlists and recommendation engines, and shortlist win rate, meaning how often you're ultimately chosen when you're included in an agent-generated comparison. Together, these are the agentic era's equivalent of share of voice, and they're almost entirely invisible in traditional analytics.

2. Intent-Based Conversion Signals

Agents generate micro-conversions that traditional funnels don't capture. A developer asking your doc agent for a migration path from a competitor. A request for your security posture details. An agent-generated POC script being downloaded. These are stage-advancement signals worth tracking, and each one indicates a meaningful move forward in the buying journey, regardless of whether a human made the request.

3. Agent Engagement and Effectiveness

If you've deployed GTM agents (doc bots, chat SDRs, onboarding assistants), you need a performance layer for those agents. Track three things: the volume and quality of developer interactions handled; resolution rate, or how often the agent successfully supports the developer without requiring human escalation; and escalation-to-conversion rate, or what the outcome is when agents do hand off to humans.
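A minimal sketch of that performance layer, assuming each agent conversation is logged with three hypothetical boolean fields (`resolved`, `escalated`, `converted`). How "resolved" and "converted" are defined is a judgment call your team has to make; the code only does the counting.

```python
def agent_effectiveness(conversations: list[dict]) -> dict[str, float]:
    """Summarize GTM agent performance from logged conversations."""
    total = len(conversations)
    escalated = [c for c in conversations if c["escalated"]]
    resolved_alone = sum(
        1 for c in conversations if c["resolved"] and not c["escalated"]
    )
    converted_after_handoff = sum(1 for c in escalated if c["converted"])
    return {
        "volume": total,
        # Resolved by the agent with no human handoff.
        "resolution_rate": resolved_alone / total if total else 0.0,
        # Of the conversations handed to a human, how many converted.
        "escalation_to_conversion_rate": (
            converted_after_handoff / len(escalated) if escalated else 0.0
        ),
    }
```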

These metrics are how you distinguish between GTM agents that accelerate the funnel and GTM agents that merely deflect it.

4. Agent-Mediated Revenue Performance

This is the accountability metric: pipeline and revenue where an AI agent played a documented role. Lead qualification by a chat agent. Meetings booked by an AI SDR. POC scaffolded by a doc agent. When you can attribute these contributions, you can quantify the ROI of your agentic infrastructure and make the case for continued investment.

Tracking CAC and sales cycle duration differentials between agent-influenced journeys and traditional journeys is the clearest signal of whether your agentic strategy is working.
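The differential itself is a cohort comparison. The sketch below assumes closed deals are tagged with a hypothetical `agent_influenced` flag and carry a per-deal acquisition cost and cycle length; it uses average cost per closed deal as a simple stand-in for CAC, which may differ from how your finance team calculates it.

```python
from statistics import mean


def journey_differentials(deals: list[dict]) -> dict[str, float]:
    """Compare agent-influenced and traditional journeys on cost and cycle length.

    Negative differentials mean the agent-influenced cohort is cheaper or faster.
    """
    agent = [d for d in deals if d["agent_influenced"]]
    traditional = [d for d in deals if not d["agent_influenced"]]
    if not agent or not traditional:
        raise ValueError("need at least one closed deal in each cohort")

    def summarize(cohort: list[dict]) -> tuple[float, float]:
        cost = mean([d["acquisition_cost"] for d in cohort])
        days = mean([d["cycle_days"] for d in cohort])
        return cost, days

    agent_cost, agent_days = summarize(agent)
    trad_cost, trad_days = summarize(traditional)
    return {
        "cac_differential": agent_cost - trad_cost,
        "cycle_days_differential": agent_days - trad_days,
    }
```

A differential like this shows correlation, not causation: accounts that lean on agents may differ from other accounts in ways that also affect cost and speed.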

Key stat: IDC projects that 62% of traditional B2B demand generation will be AI-led by 2028, transforming developer engagement into an orchestrated system that continuously adapts based on intent signals. (Source: IDC, Inside the AI-Led Buyer Journey)

What Should Your Developer Marketing Program Do Now?

The developer funnel hasn't been replaced; it's been hybridized. Agents are co-pilots at every stage, and at some stages they're the primary drivers. Developer marketing teams that treat this as a future-state curiosity will find themselves consistently filtered out before a human developer ever evaluates them.

Three priorities are clear and actionable now.

Make your content machine-legible. Structure, clarity, and explicit fit signals matter more than ever. This means clean HTML, Q&A documentation sections, explicit constraint language, and ICP statements that an agent can parse without inference. Our post on The Future of Documentation Is Machine-First covers some additional details on this topic.

Invest in your own GTM agents. Doc assistants, onboarding bots, and chat SDRs are no longer differentiators; they're table stakes for competing in enterprise developer buying cycles. The vendors winning agent-mediated evaluations are deploying agents that respond accurately to the same questions that external research agents are asking.

Instrument for agent signals. Expand your analytics to capture shortlist appearances, micro-conversions, and agent-interaction data that traditional funnel metrics miss entirely. You can't optimize what you can't see, and the signal that matters most in an agentic funnel is often the one your current stack doesn't log.

Conclusion

The developer funnel is not dead, but the assumption that humans drive every stage of it is. AI agents are now the first audience for your documentation, the first evaluators of your technical capabilities, and increasingly the first ones to interface with your developer experience. Developer marketing teams that adapt their content, GTM tooling, and measurement frameworks to this hybrid human–agent reality will have a structural advantage in the years ahead. Those that don't will find themselves winning the human audience while losing the deal to a competitor whose docs were more legible to the agent that ran the shortlist.

The developer funnel for an agentic era demands a new playbook. The core principles aren't unfamiliar: clarity, structure, technical depth, and meeting developers where they are. The shift is that "where they are" now includes an AI layer that reads, evaluates, and decides before the human ever clicks.

Want to see how these strategies apply to your developer program? Talk to a Catchy strategist →

Frequently Asked Questions

What is an agentic developer funnel?

An agentic developer funnel is a hybrid version of the classic developer marketing funnel — awareness, evaluation, POC, adoption — where AI agents perform significant portions of the discovery, research, comparison, and trial-scaffolding work that humans previously handled. Rather than replacing the funnel, agents compress and mediate several stages, requiring developer marketers to optimize for both human and machine audiences simultaneously.

How do AI agents affect developer tool discovery?

AI agents crawl documentation, API catalogs, marketplaces, GitHub repositories, and community signals to build vendor shortlists on behalf of engineering teams. If your documentation is poorly structured, your ICP is unclear, or your content isn't machine-legible, you may be filtered out of consideration before a human developer ever encounters your product. Visibility in agent-friendly surfaces — not just traditional search — is now a critical developer marketing priority.

What new metrics do developer marketers need for an agentic era?

Four metric categories matter most: visibility and selection metrics (shortlist appearances and win rates in agent-curated comparisons), intent-based conversion signals (micro-conversions like migration path requests or POC script downloads), agent engagement and effectiveness (resolution rates and conversion impact of your own GTM agents), and agent-mediated revenue performance (pipeline and revenue attributable to agent-assisted touchpoints).

How should developer documentation change to support AI agents?

Documentation needs consistent structure, Q&A-style sections, clear feature lists with honest constraints, and comparative content written with enough nuance that agents can restate it accurately. Agents return high-quality answers when documentation is well-structured — and they hallucinate or omit you when it isn't. Treating your docs as a prompt-ready knowledge base, not just a human-facing reference, is the highest-leverage documentation shift for the agentic era.

What does "agent-started POC" mean as a metric?

An agent-started POC is a trial or proof-of-concept where an AI agent — either the developer's own internal tool or a vendor-provided doc or onboarding agent — initiated or materially scaffolded the integration work. Tracking the ratio of agent-started to human-started POCs, and comparing success rates across those cohorts, gives developer marketing teams precise data on the value their agentic infrastructure is delivering at the mid-funnel stage.

How does Catchy help developer marketing teams adapt to agentic buying journeys?

Catchy combines its Developer Signal Hub framework for capturing behavioral and intent signals with its AI Developer Voice methodology for building content systems and GTM agent strategies that work across human and machine audiences. For clients navigating the shift to agentic developer buying, Catchy provides strategic consulting, content architecture, GTM agent strategy, and measurement framework design — grounded in 15+ years of developer program experience across Fortune 100 clients.